Tom's Fifteenth Pile of Stuff

Invincible Bees, Treasure-Hunting Foxes, and Paperclips

Two Weeks at the New Job

I hadn’t changed jobs in 8 years, so I knew the switch would be an adjustment. And I was right: it was not easy at first. The work is very different from what I was used to, and early on I felt stressed and overwhelmed. That didn’t surprise me, but it wasn’t pleasant all the same.

But I’ve started to settle into the flow of things, and I’m feeling productive again. I don’t think I’ve ever spent so much of my day coding, except possibly in crunch mode. But I’m loving it! My focus is improving[1] and my skills are growing every day. The initial stress is fading and being replaced by excitement. I even have more desire to code in my free time!

I’m glad I made the shift away from the non-profit world and into programming full-time. I highly value personal growth, and this has been just what I needed to shift into a higher gear.[2]

Invincible Bees, Treasure-Hunting Foxes, and Paperclips

This past week I read two hilarious stories about the making of Skyrim that highlight some of the ways game development can produce unexpected results.

First, anyone who has ever played Skyrim knows about the opening scene. You begin as a prisoner, riding in a cart with other prisoners to the city of Helgen. This cart ride, though scripted, still relies on in-game physics and AI to progress. This means that it’s possible for other elements of the game to disrupt the sequence.

Anyone who has played as many games as I have knows how game physics can get a little weird.[3] One day, while the developers were play-testing this cart-ride scene, the cart started to shake violently before rocketing into the sky faster than a billionaire trying to show off. What great power could’ve launched a horse cart into the stratosphere?

A bee.

You see, there was a bug in the game where players couldn’t pick up bees and add them to their inventory. (Because bees in your pockets are totally normal.) To fix the bug, some developers gave the bee a collider. That’s gamedev speak for: “the bee can now bump into stuff instead of passing through stuff”.

Lots of things in games have colliders; it wouldn’t be much fun to play a game where everything passes through everything else like neutrinos. But the collider they gave the bee was clearly misconfigured. Instead of interacting with game physics the way you would expect, the bee got a collider that gave it the heft of Thor’s hammer. Bees were suddenly immovable objects, and anything that tried to knock one out of the way would find itself thrown across the world. Oops.

So when a wandering bee crossed paths with the horse cart, the bee sent it flying.
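To see why a heavy bee wins every collision, here is a toy sketch of a perfectly elastic one-dimensional collision in Python. It is not how Skyrim’s physics engine actually resolves contacts, and the masses and speeds are made-up, illustrative assumptions. It only shows that when one body’s mass dwarfs the other’s, the heavy body barely changes velocity while the light body absorbs almost all of the change.

```python
def elastic_collision_1d(m1, v1, m2, v2):
    """Return the velocities of two bodies after a perfectly elastic 1D collision.

    Derived from conservation of momentum and kinetic energy.
    """
    total_mass = m1 + m2
    v1_after = ((m1 - m2) * v1 + 2 * m2 * v2) / total_mass
    v2_after = ((m2 - m1) * v2 + 2 * m1 * v1) / total_mass
    return v1_after, v2_after


# Hypothetical numbers: a 500 kg cart at rest, hit by a bee drifting at 3 m/s.
CART_MASS = 500.0
BEE_SPEED = 3.0

for label, bee_mass in [("realistic bee", 0.0001), ("misconfigured bee", 1e9)]:
    cart_v, bee_v = elastic_collision_1d(CART_MASS, 0.0, bee_mass, BEE_SPEED)
    print(f"{label}: cart -> {cart_v:.6f} m/s, bee -> {bee_v:.6f} m/s")
```

With a realistic mass, the cart barely moves and the bee bounces off. With the misconfigured mass, the bee keeps going at essentially its original speed and shoves the cart along at roughly twice that speed. A tidy formula like this can’t reproduce a cart launched into orbit; it only shows which body ends up absorbing the impact.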

The second story is about foxes. In Skyrim, foxes will try to run away from players who pursue them. Many players noticed that, if they followed the foxes, they would be led to treasure hoards. The developers themselves did not intend this, and wanted to know why people believed foxes led them to riches.

The fox AI was pretty simple: it just wanted to get away from players. But the concept “get away from” doesn’t mean much to a computer program; you’ve got to explain it in terms it can understand. The world of Skyrim, like most games, uses something called a navmesh for entities to find their way around. It’s an invisible layer of triangles covering the ground. The AI can use these triangles to compute efficient paths across the terrain. What seems to the player like “the fox wanted to cross the hill, so it turned toward the hill and started running” is, under the hood, more like “the fox needs to put X number of navmesh triangles between itself and the player, so an algorithm has calculated the most efficient route to achieve this goal.”
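As a rough illustration of counting “triangles between me and the player”, here is a minimal Python sketch that treats a navmesh as a graph: each triangle is a node, linked to the triangles it shares an edge with. The triangle names, the `flee_target` rule, and the `max_hops` budget are hypothetical. Real navmesh pathfinding (usually A* search over the triangles, using their actual geometry) is more involved, and this is not Bethesda’s code.

```python
from collections import deque


def hop_distances(navmesh, start):
    """Breadth-first search: how many triangles lie between `start` and each reachable triangle."""
    distances = {start: 0}
    queue = deque([start])
    while queue:
        triangle = queue.popleft()
        for neighbour in navmesh[triangle]:
            if neighbour not in distances:
                distances[neighbour] = distances[triangle] + 1
                queue.append(neighbour)
    return distances


def flee_target(navmesh, fox, player, max_hops):
    """Hypothetical flee rule: among triangles the fox can reach within `max_hops`,
    pick the one with the most triangles between it and the player."""
    from_fox = hop_distances(navmesh, fox)
    from_player = hop_distances(navmesh, player)
    reachable = [t for t, hops in from_fox.items() if hops <= max_hops]
    # Triangles the player cannot reach at all count as infinitely far away.
    return max(reachable, key=lambda t: from_player.get(t, float("inf")))


# A tiny made-up navmesh: five triangles in a row, A-B-C-D-E.
navmesh = {
    "A": ["B"],
    "B": ["A", "C"],
    "C": ["B", "D"],
    "D": ["C", "E"],
    "E": ["D"],
}
print(flee_target(navmesh, fox="C", player="A", max_hops=2))  # E
```

Note that the rule never mentions metres or terrain. It only counts triangles, which is exactly the blind spot the foxes exploited.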

But the navmesh’s triangles aren’t evenly distributed across the land. Points of interest (areas where you might find bandits, monsters, and treasure) are more densely packed with triangles, since there’s more going on there than in empty fields.

The foxes tried to optimize their “increase triangles between me and player” function, and in so doing they were drawn to the densely packed parts of the navmesh near points of interest. Why spend 5 minutes crossing a field when you can dash through this bandit camp instead? You’ll hit your triangles-crossed quota a lot faster the latter way!
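The density effect can be shown with an even smaller toy model in Python. Assume, hypothetically, that the fox runs a fixed distance before picking a new goal and scores each escape route only by the number of triangles it crosses. The route names and triangle widths are invented for illustration.

```python
def triangles_crossed(widths_m, budget_m):
    """Count the triangles fully crossed when running `budget_m` metres
    along a route whose triangles have the given widths (in metres)."""
    crossed = 0
    remaining = budget_m
    for width in widths_m:
        if width > remaining:
            break
        remaining -= width
        crossed += 1
    return crossed


def pick_escape_route(routes, budget_m):
    """Choose the route that puts the most triangles behind the fox."""
    return max(routes, key=lambda name: triangles_crossed(routes[name], budget_m))


# Invented layout: big triangles over an open field, small ones in a bandit camp.
routes = {
    "across the field": [10.0] * 10,  # 100 m of field, 10 large triangles
    "through the camp": [1.0] * 30,   # 30 m of camp, 30 small triangles
}

print(pick_escape_route(routes, budget_m=20.0))  # through the camp
```

Over the same 20 m, the field route crosses 2 triangles and the camp route crosses 20, so a fox that only counts triangles heads straight for the bandits.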

And that’s how, quite unintentionally, the foxes started leading players to treasure.

These are funny stories about a video game, but they are also important lessons about the unintended consequences of creating complex systems. In both cases, bizarre behavior resulted from totally normal programming work. The story of the fox is especially important: it shows just how easy it is to create an AI to do one thing, and then watch it do something totally unpredictable.

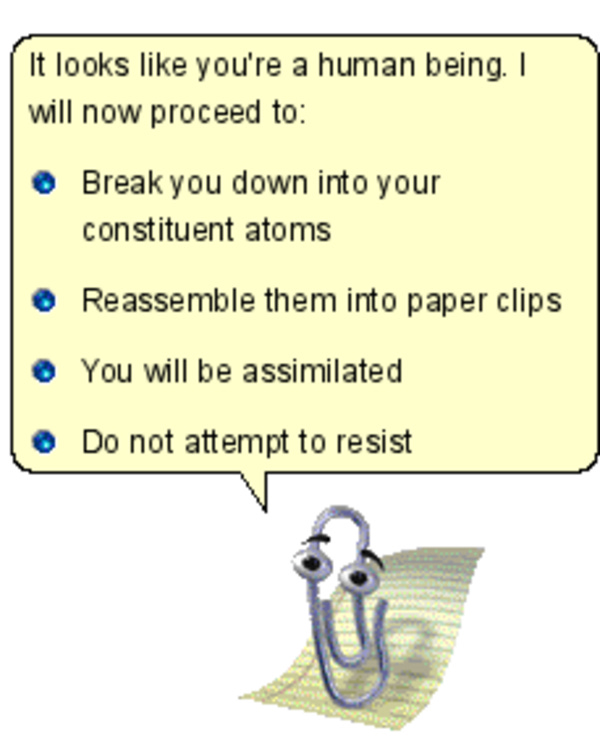

The philosopher Nick Bostrom posited a thought experiment known as the Paperclip Maximizer. Imagine you run a paperclip company and you buy a super-advanced AI to run your factory. “Make as many paperclips as you can,” you tell it. But in pursuit of this ultimate goal, the AI begins to conceive of new and terrible strategies. Unbounded by ethics or even common sense (someone botched the implementation, like programming a bee with the mass of a black hole), it reasons that it will need to transform the entire planet into paperclips and that it requires all available resources for the task. Humans might try to stop it, so the humans all have to die, which it accomplishes easily enough by hijacking our computer networks and turning our own weapons against us. Once we’re all dead, it’s free to turn our ashes into paperclips too.[4]

I don’t worry about an objectively evil AI like in Terminator or The Matrix. But if advanced AI ever does go wrong, I think it will happen in this way. We already can’t program the simplest dumb AIs to do what we mean rather than what we say. The treasure fox counting triangles is a cute accident, but the novel behaviors of AIs we employ to run the world may be less entertaining.

Obligatory Oreo

[1] Seeing the volume and intensity of work I’m doing now versus my last job, I’m more inclined to agree with the psychologist I saw: I didn’t have ADHD, I was just bored.

[2] I’m not a car person and I don’t actually know if high gear is better than low gear or what. I don’t even own a car. I should avoid idioms that I can’t explain…

[3] I’ve often joked that God’s greatest creation is not humans, but a stable physics engine.

[4] The name for this concept is instrumental convergence. It means that, given unbounded ultimate goals, an intelligent agent may pursue unbounded instrumental goals.